Everyone is chasing the perfect prompt. The best model. The ideal agent setup.

Meanwhile, one solo developer is running a product used by thousands — writing zero lines of code by hand, handling all customer support himself, and shipping bug fixes while he sleeps.

His name is Naveen. He’s the GM and sole engineer behind Monologue, a smart dictation app that processes over 2 million words per day. I sat down with him to understand how he does it — and walked away with seven AI lessons that apply to anyone doing knowledge work today.

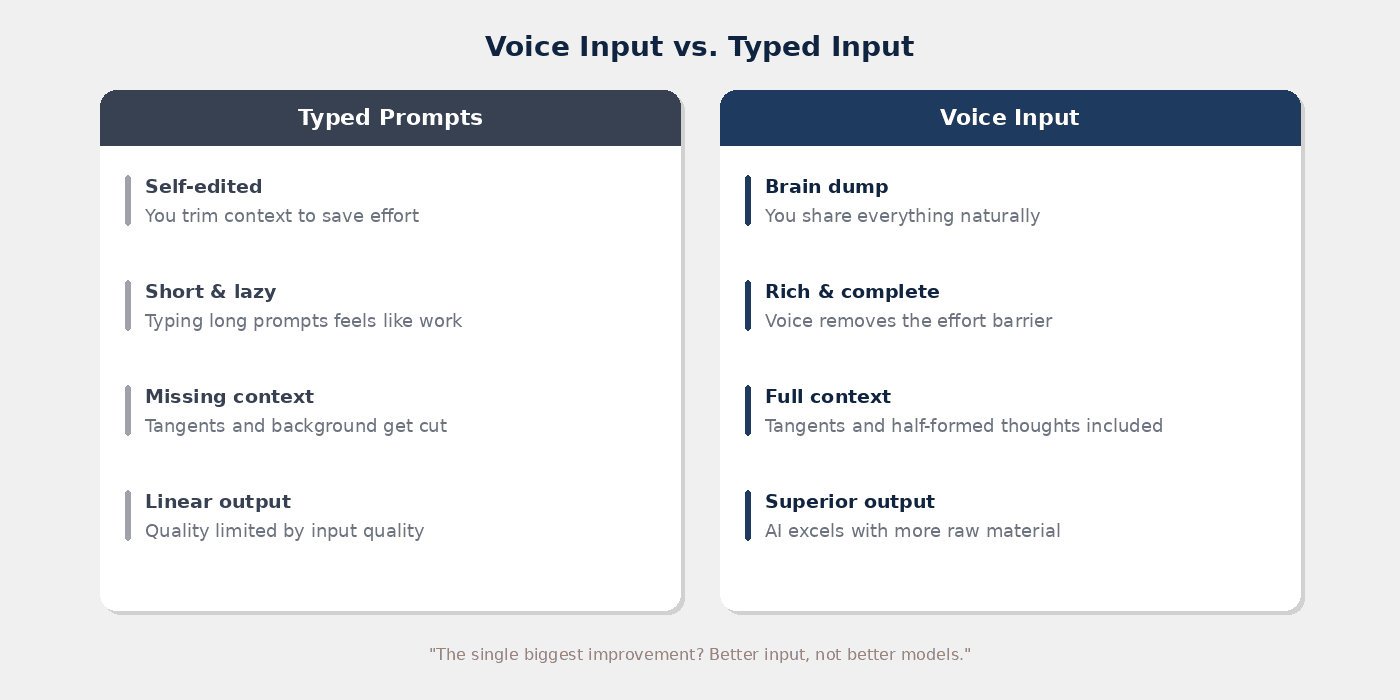

1. Stop Typing, Start Talking

The single biggest thing that will improve your AI results has nothing to do with prompt engineering. It’s switching from typing to voice. This principle aligns with how structured prompting works in Notion AI — better input quality fundamentally changes output quality.

That sounds almost too simple. But here’s the logic: when you type, you self-edit. You trim context. You write shorter, lazier prompts because typing long ones feels like work.

When you talk, you brain dump. You give the AI everything — the context, the tangents, the half-formed thoughts. And AI is brilliant at structuring raw, messy input.

Monologue’s top user dictates 450,000 words per month. They’ve essentially stopped typing altogether — 99% voice. And their AI output quality has gone through the roof. Not because the models got better, but because the input did.
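To put that figure in perspective, here is a rough back-of-the-envelope calculation. The 30-day month, the 150 words-per-minute speaking rate, and the 40 words-per-minute typing rate are illustrative assumptions, not numbers from Naveen or Monologue.

```python
# Rough arithmetic on the 450,000 words/month figure quoted above.
# Every rate below is an illustrative assumption, not measured data.
WORDS_PER_MONTH = 450_000
DAYS_PER_MONTH = 30   # assumption: a 30-day month
SPEAKING_WPM = 150    # assumption: a typical conversational speaking rate
TYPING_WPM = 40       # assumption: a typical typing speed


def minutes_per_day(words_per_month: int, words_per_minute: int) -> float:
    """Return the daily minutes needed to produce the monthly word count."""
    words_per_day = words_per_month / DAYS_PER_MONTH
    return words_per_day / words_per_minute


words_per_day = WORDS_PER_MONTH / DAYS_PER_MONTH
print(f"Words per day: {words_per_day:,.0f}")
print(f"Speaking: {minutes_per_day(WORDS_PER_MONTH, SPEAKING_WPM):.0f} min/day")
print(f"Typing:   {minutes_per_day(WORDS_PER_MONTH, TYPING_WPM):.0f} min/day")
```

Under those assumptions, that is about 15,000 words a day: roughly 100 minutes of speaking, versus more than six hours of typing the same volume.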

Pro Tip: Don’t start by replacing all your typing. Start with one thing — your next ChatGPT or Claude conversation. Dictate instead of type. Once you feel the difference in output quality, you’ll naturally expand from there.

2. Treat Customer Support As Product

How does one person provide fast, thoughtful support to thousands of users? By treating support not as a cost centre, but as a core part of the product experience.

Naveen uses AI coding agents (Codex) for his development work. Each task runs for 5–10 minutes. Instead of scrolling X or checking YouTube while waiting, he switches to a support thread.

He’s built a system where he can pull in active Intercom conversations with a single command. The AI already has access to his codebase, his database, and his help centre — so it can draft responses, identify edge cases, and suggest fixes in seconds.

The result: most users get a reply within 30 minutes. From a one-person team.

The lesson for knowledge workers? Use the gaps. Every time an AI agent is working on something, you have a window. The question isn’t whether you have time — it’s what you do with the 5-minute pockets that AI creates throughout your day.

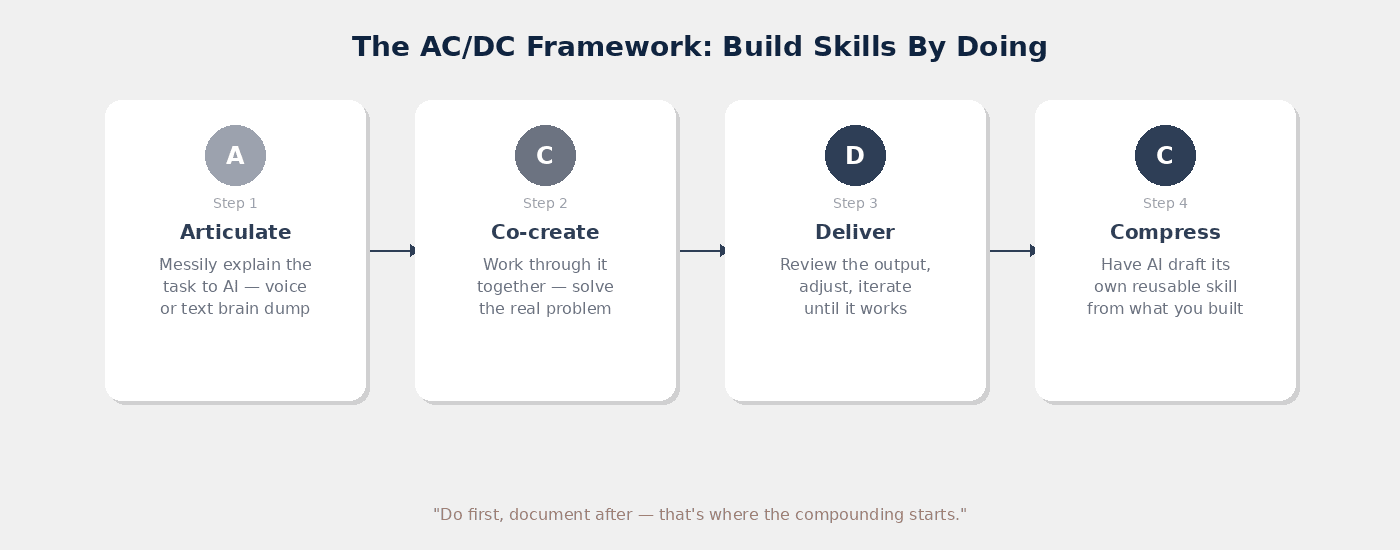

3. Build Skills By Doing, Not By Planning

Everyone wants to start with the perfect system prompt. The perfectly written skill file. The flawless agent instructions.

That’s backwards. Working the other way round mirrors the Notion AI Skills philosophy: skills emerge from real workflows, not theoretical frameworks.

Naveen’s approach: create an empty folder, start a thread, and messily work through the task with the AI. Need to pull Intercom conversations? Ask the AI to figure it out. It walks you through authentication, API access, local token storage. You solve the problem together.

Then — and only then — you ask the AI to compress what you both learned into a reusable skill.

This maps to a framework we use with our clients: AC/DC. First, you messily explain the task to AI. Then it executes. You review. And finally, you have it draft its own instructions. That last step is the one most people never reach, but it’s where the compounding starts.

Pro Tip: Resist the urge to download pre-made skill templates. Skills built from your own workflows will always outperform generic ones — because they encode your context, edge cases, and preferences.

4. Teach Your AI To Compound

Static instructions decay. If your AI’s knowledge doesn’t grow, it gets harder to work with over time — not easier.

Naveen’s solution: after every customer support session, he asks the AI to review all of the day’s conversations and update its own knowledge base. The help centre articles improve. The skill files evolve. The AI gets better at handling similar tickets next time.

This is the principle of compound engineering — the idea that your AI should learn from every interaction, not just execute the same playbook repeatedly.

You don’t need a fully autonomous learning loop for this. Even a simple habit works: every 10th sentence, tell your AI to remember what you just discussed. “Make sure to update your docs with this.” “Add this to your skill file.” It takes five seconds when you’re using voice — and the payoff compounds daily.
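As a minimal sketch of that habit, assuming the skill lives in a plain Markdown file on disk, the helper below appends a dated note each time something is worth remembering. The function name `append_learning` and the file layout are hypothetical, chosen for illustration; this is not how Naveen's setup is actually implemented.

```python
from datetime import date
from pathlib import Path
from typing import Optional


def append_learning(skill_file: Path, note: str, on: Optional[date] = None) -> str:
    """Append a dated bullet to a Markdown skill file and return the line written."""
    stamp = (on or date.today()).isoformat()
    line = f"- {stamp}: {note.strip()}"
    # Create the parent folder on first use so the helper works on an empty project.
    skill_file.parent.mkdir(parents=True, exist_ok=True)
    # Append mode keeps every earlier learning intact.
    with skill_file.open("a", encoding="utf-8") as handle:
        handle.write(line + "\n")
    return line
```

Calling `append_learning(Path("skills/support.md"), "what we just worked out")` at the end of a session is the file-level equivalent of saying "add this to your skill file". Appending rather than rewriting keeps the history, so the AI can later be asked to compress the accumulated notes.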

The difference between a 2x productivity gain and a 10x one? The 2x comes from using AI. The 10x comes from letting it learn.

5. Let AI Do 80% — Then Review The 20% That Matters

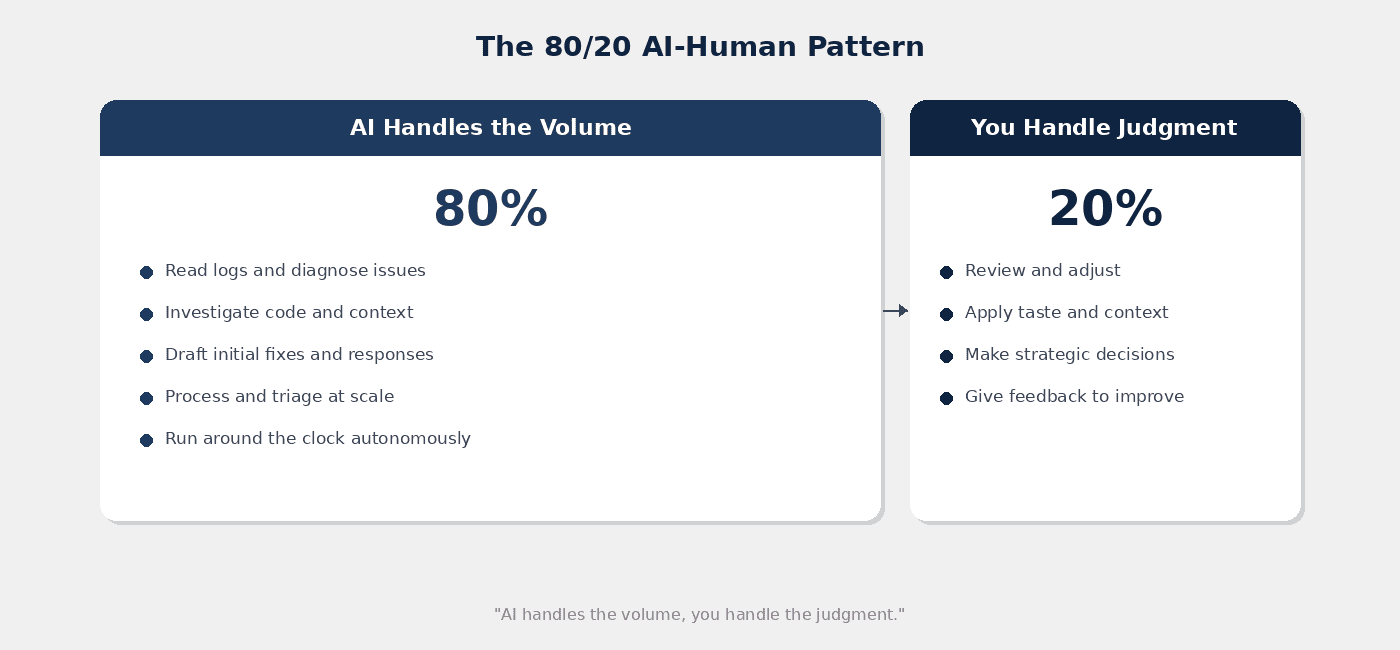

Naveen built an AI agent called Cooper (named after the Interstellar character). Cooper is connected to Sentry (error tracking), Linear (project management), and the full GitHub codebase. This autonomous execution model is what custom agents in Notion are designed to achieve — AI handles volume, you handle judgment.

When a crash or error occurs, Cooper automatically reads the logs, analyses the code, and creates a pull request with a fix. This happens around the clock — including while Naveen sleeps.

Every morning, Naveen reviews what Cooper produced overnight. Most of the time, he doesn’t merge the code as-is. He adjusts, gives feedback, cleans things up. But the 80% that Cooper handled — the diagnosis, the investigation, the initial fix — saves hours.
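The shape of that overnight loop can be sketched in a few lines. Everything here is a hypothetical stand-in: `ErrorEvent`, `DraftFix`, `overnight_triage`, and `morning_review` are illustrative names, and the sketch calls no real Sentry, Linear, or GitHub API. It only shows the split between the automated first pass and the human decision.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class ErrorEvent:
    """A crash or error report (hypothetical shape, not Sentry's schema)."""
    event_id: str
    message: str
    stack_trace: str


@dataclass
class DraftFix:
    """A proposed change that still waits for human review."""
    event_id: str
    summary: str
    patch: str
    approved: bool = False  # only the human review step flips this


def overnight_triage(
    events: List[ErrorEvent],
    propose_fix: Callable[[ErrorEvent], DraftFix],
) -> List[DraftFix]:
    """Automated first pass: produce one unapproved draft fix per error."""
    return [propose_fix(event) for event in events]


def morning_review(
    drafts: List[DraftFix],
    approve: Callable[[DraftFix], bool],
) -> List[DraftFix]:
    """Human pass: mark and return only the drafts the reviewer accepts."""
    accepted = []
    for draft in drafts:
        if approve(draft):
            draft.approved = True
            accepted.append(draft)
    return accepted
```

In practice `propose_fix` would wrap whatever agent does the diagnosis, and `approve` is the person reading the pull request. Keeping `approved` false by default means nothing ships without that second step.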

This is the pattern to internalise: AI handles the volume, you handle the judgment. The same principle applies whether you’re triaging emails, reviewing reports, or processing applications. Let the AI do the first pass. Your job is the final 20% — the taste, the context, the decisions that require human understanding. For teams scaling this approach, Notion Consulting services help design workflows where humans and AI work this way together.

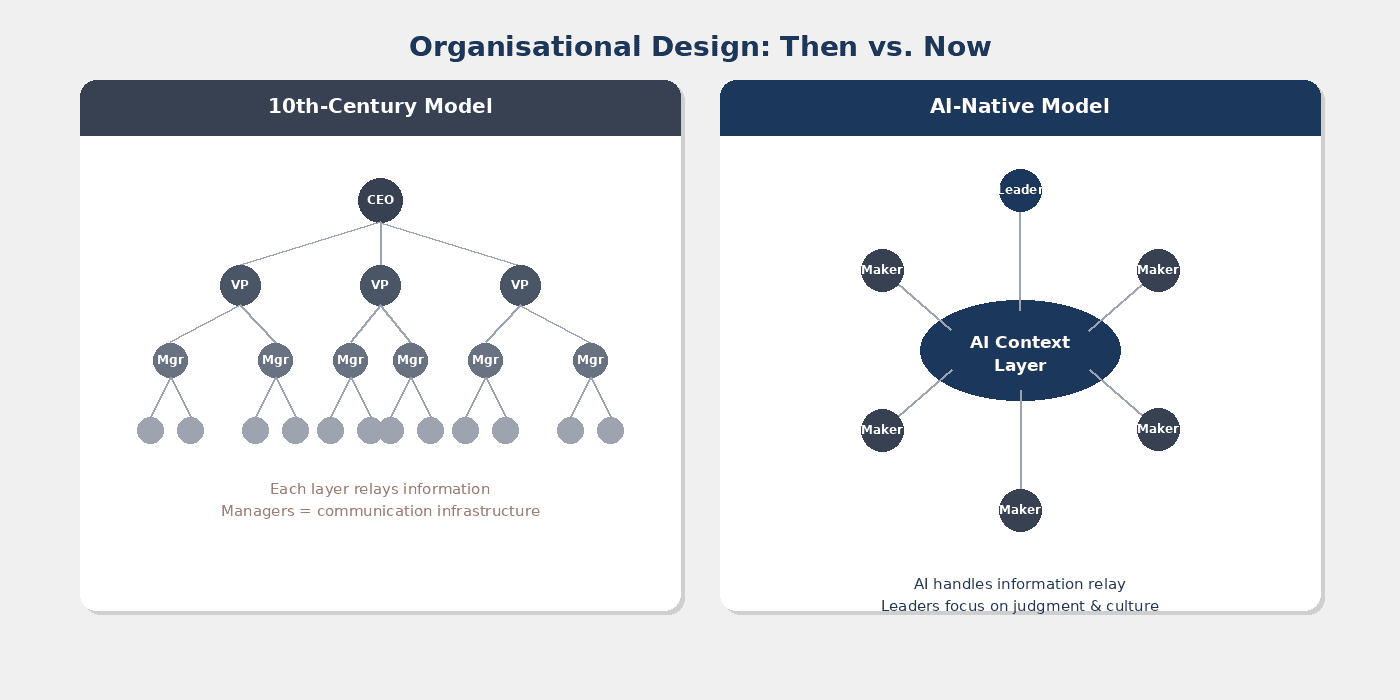

6. Your Org Chart Might Be From The 10th Century

Here’s a thought that will change how you think about team structure.

Naveen referenced a blog post by Jack Dorsey (Block / Square) arguing that modern organisational hierarchies are modelled on 10th-century military structures. Back then, there were no telephones. No email. No instant messaging. The only way to coordinate large groups was through a chain of command — each layer existed primarily to relay information. Understanding this shift is central to what’s called The Judgment Economy — the idea that AI handles information relay, freeing managers to focus on judgment and culture.

Managers were, fundamentally, communication infrastructure.

Now ask yourself: if AI provides a rich, always-available context layer — one that can summarise, distribute, and even act on information — do you still need the same structure?

This doesn’t mean managers become irrelevant. It means their role shifts from information routing to judgment, culture, and strategic direction. And it means smaller teams — or even solo operators — can achieve what previously required a department.

The question for every leader: which layers of your organisation exist because of communication bottlenecks that AI has already solved?

7. Play Like It’s 2007

Naveen compares the current AI moment to the iPhone launch in 2007. That 2007–2011 window was a golden period — a flood of creative apps, new business models, entirely new categories.

We’re in the same kind of window right now. The rules haven’t been written yet. No one has figured out the “right” way to work with AI — and anyone who claims otherwise is either delusional or selling something.

At Every (the company Naveen works with), the culture is built around a single word: play. Not in the trivial sense. In the childlike sense — where there’s no concept of time, where you explore without fear of failure, where every experiment teaches you something even when it doesn’t work.

This might be the most important lesson of all. You don’t need to have a strategy for AI. You need to have a practice. Try things. Break things. Build things that go nowhere. Because the people who will thrive in this era aren’t the ones who waited for the playbook — they’re the ones who wrote it by experimenting. If you’re building AI workflows in Notion, that same principle applies — multi-agent orchestration comes from doing, not planning.

The Shift At A Glance

| Area | Traditional Approach | AI-Native Approach |
|---|---|---|
| AI Input | Typed prompts, carefully edited | Voice brain dumps, raw and complete |
| Support | Dedicated team or outsourced | AI-assisted, handled in idle gaps |
| Skill Building | Write instructions upfront, then execute | Do the work first, compress into skills after |
| AI Knowledge | Static prompts and templates | Dynamic skills that learn and update |
| Execution | Human does 100% of the work | AI does 80%, human reviews 20% |
| Org Design | Hierarchies built for information relay | Flat structures built for judgment |
| Mindset | Optimise and plan before acting | Experiment, fail fast, compound learnings |

Frequently Asked Questions

Why Is Voice Dictation Better Than Typing For AI?

When you type, you naturally self-edit and cut context to save effort. Voice lets you brain dump everything: tangents, background, half-formed ideas included. AI models generally produce better output when they are given more relevant context, so the quality gap between typed and dictated prompts can be substantial. Monologue’s power users report that switching to voice was the single biggest improvement to their AI output. This is exactly why the Notion AI Hub emphasises feeding AI rich, contextual input.

Can You Really Run A Product As A One-Person Team?

Yes — with the right systems. Naveen runs Monologue (2 million+ words processed daily) entirely solo, handling engineering, customer support, and product development. The key isn’t working more hours. It’s building AI systems that handle the volume work while you focus on judgment calls, quality review, and strategic decisions.

What’s The Best Way To Create AI Skills Or Instructions?

Don’t write them upfront. Work through the task messily with the AI first — solve the real problem together. Once you’ve both figured out the workflow, ask the AI to compress everything into a reusable skill. This “do first, document after” approach consistently outperforms pre-written templates because it captures your actual edge cases and preferences. Learn more in the complete Notion AI Skills guide.

How Do AI Agents Handle Code Fixes Autonomously?

Naveen’s agent (Cooper) connects to error tracking (Sentry), project management (Linear), and the full codebase (GitHub). When an error occurs, Cooper analyses the logs, identifies the issue, and creates a pull request with a proposed fix — all autonomously. The human reviews each morning, adjusts where needed, and merges. It’s the 80/20 principle applied to software maintenance. For a deep dive into building these systems, see how Notion uses AI agents internally.

Do AI-Native Organisations Still Need Managers?

The role changes rather than disappears. Traditional management hierarchies were built to solve communication bottlenecks. AI removes much of that bottleneck. Managers in AI-native organisations focus less on information routing and more on judgment, culture, and strategic direction — while smaller teams achieve what previously required entire departments. This transformation is covered in depth in The Judgment Economy essay.