The better you are at your job, the harder it is to hand that job to AI.

That sounds backwards. It is not.

The more experienced someone is, the more their expertise has compressed into intuition.

They do not think through the steps anymore. They just know. The new hire takes forty-five minutes to route the morning schedule. The veteran does it in ten.

The veteran is not faster at following rules. The rules have become reflexes. And reflexes are invisible — to colleagues, to systems, and to AI.

Most expertise lives in a format machines cannot read.

The AI conversation right now is about capability. Better models. Faster inference. Bigger context windows.

None of that matters if the knowledge AI needs to act on is locked inside someone’s head.

The bottleneck is not intelligence. It is legibility.

Let me show you what this looks like.

A field services company. Forty technicians, forty jobs, one metro area. The owner had built a Notion workspace. Databases for jobs, technicians, customers. Solid structure.

He wanted AI to handle dispatch.

The AI scheduled a new hire solo on day three. It put a different technician on the owner’s most important relationship account. It prioritised drive time and ignored certifications.

The system looked complete. The output was useless.

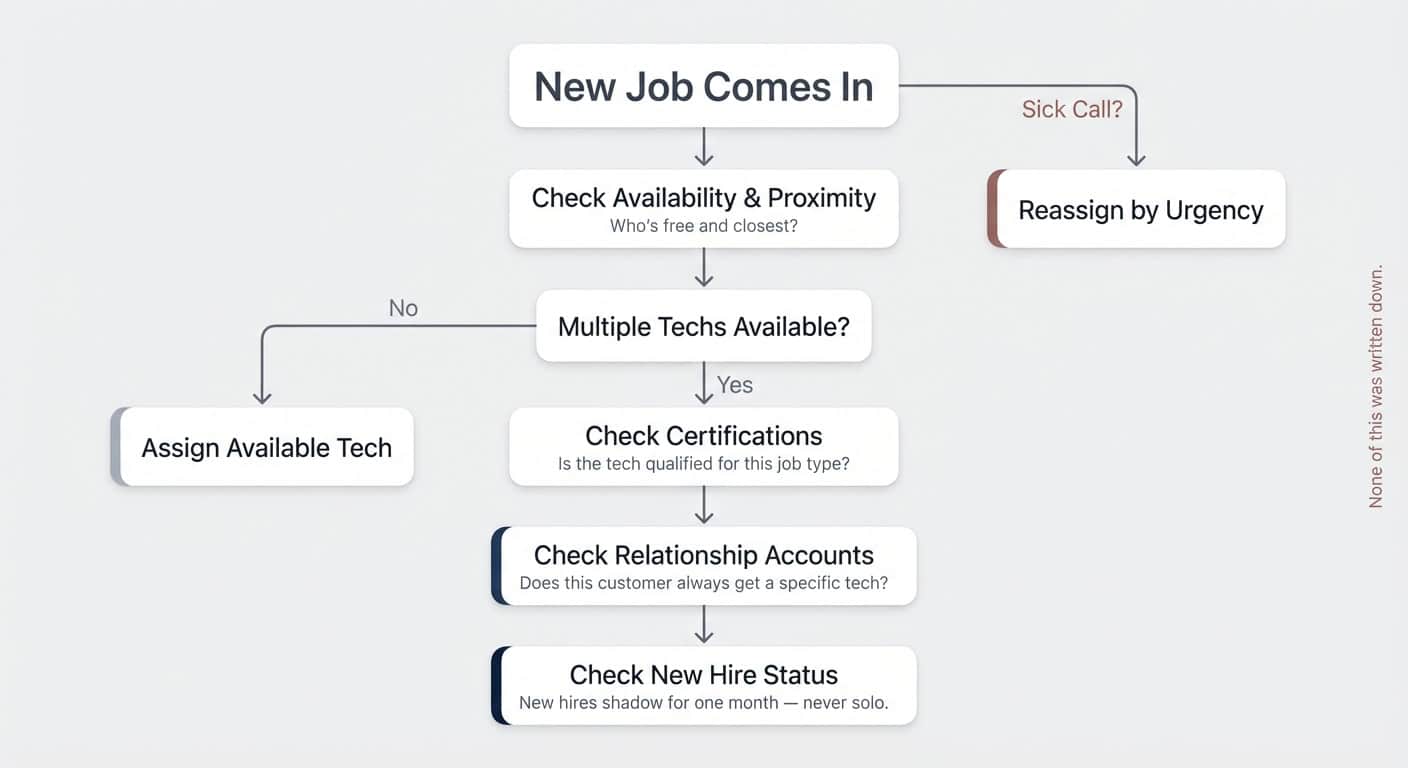

The extraction conversation starts simple.

How do you decide which tech gets which job?

Availability and proximity.

What if two are equally close?

Certifications.

What if both are certified?

Relationship accounts — some customers always get the same person.

What about new hires?

They shadow for a month.

What if someone calls in sick?

We reassign based on urgency.

That is not one rule. That is a decision tree. Geography matters, but certifications override geography. Relationships override certifications. New hires have restrictions. Sick calls trigger a different logic entirely.

None of it was written down. The owner had been pattern-matching daily for years. He could execute it perfectly.

He could not articulate it.
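Once the rules are spoken out loud, they are small enough to write down. Here is a minimal sketch of the owner's answers as a priority ordering, not his actual system. The names `Technician`, `Job`, `pick_technician` and `SHADOW_PERIOD_DAYS` are hypothetical. Reading "a month" as 30 days is an assumption, and so is treating certification as a strong preference rather than a hard requirement. Sick-call reassignment is left out because the conversation never spelled out how urgency is ranked.

```python
from dataclasses import dataclass
from typing import Optional

SHADOW_PERIOD_DAYS = 30  # assumption: "they shadow for a month" means 30 days


@dataclass
class Technician:
    name: str
    certifications: set[str]
    drive_minutes: int   # estimated drive time to the job site
    days_on_job: int     # tenure, used for the shadowing rule
    available: bool = True


@dataclass
class Job:
    customer: str
    required_certification: str
    relationship_tech: Optional[str] = None  # the customer's usual tech, if any


def pick_technician(job: Job, techs: list[Technician]) -> Optional[Technician]:
    """Return the best technician for a job, or None if nobody can go solo."""
    # New hires and unavailable techs are never scheduled solo.
    candidates = [
        t for t in techs
        if t.available and t.days_on_job >= SHADOW_PERIOD_DAYS
    ]
    if not candidates:
        return None  # hand the decision back to a human instead of guessing

    # Tuples compare left to right and False sorts before True, so the
    # priority is: relationship, then certification, then proximity.
    return min(
        candidates,
        key=lambda t: (
            t.name != job.relationship_tech,
            job.required_certification not in t.certifications,
            t.drive_minutes,
        ),
    )


techs = [
    Technician("Ana", {"hvac"}, drive_minutes=40, days_on_job=400),
    Technician("Ben", set(), drive_minutes=5, days_on_job=200),
    Technician("Cal", {"hvac"}, drive_minutes=10, days_on_job=3),
    Technician("Dee", {"hvac"}, drive_minutes=25, days_on_job=300),
]

# Relationship account: Ana goes, even though she is the farthest away.
print(pick_technician(Job("Acme", "hvac", relationship_tech="Ana"), techs).name)  # Ana
# No relationship: Dee beats Ben (closer but uncertified) and Cal (day three).
print(pick_technician(Job("Birch", "hvac"), techs).name)  # Dee
```

Each line of the sort key is one answer from the conversation. Change the order of the tuple and you change the business.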

The Trap Between Knowing and Telling

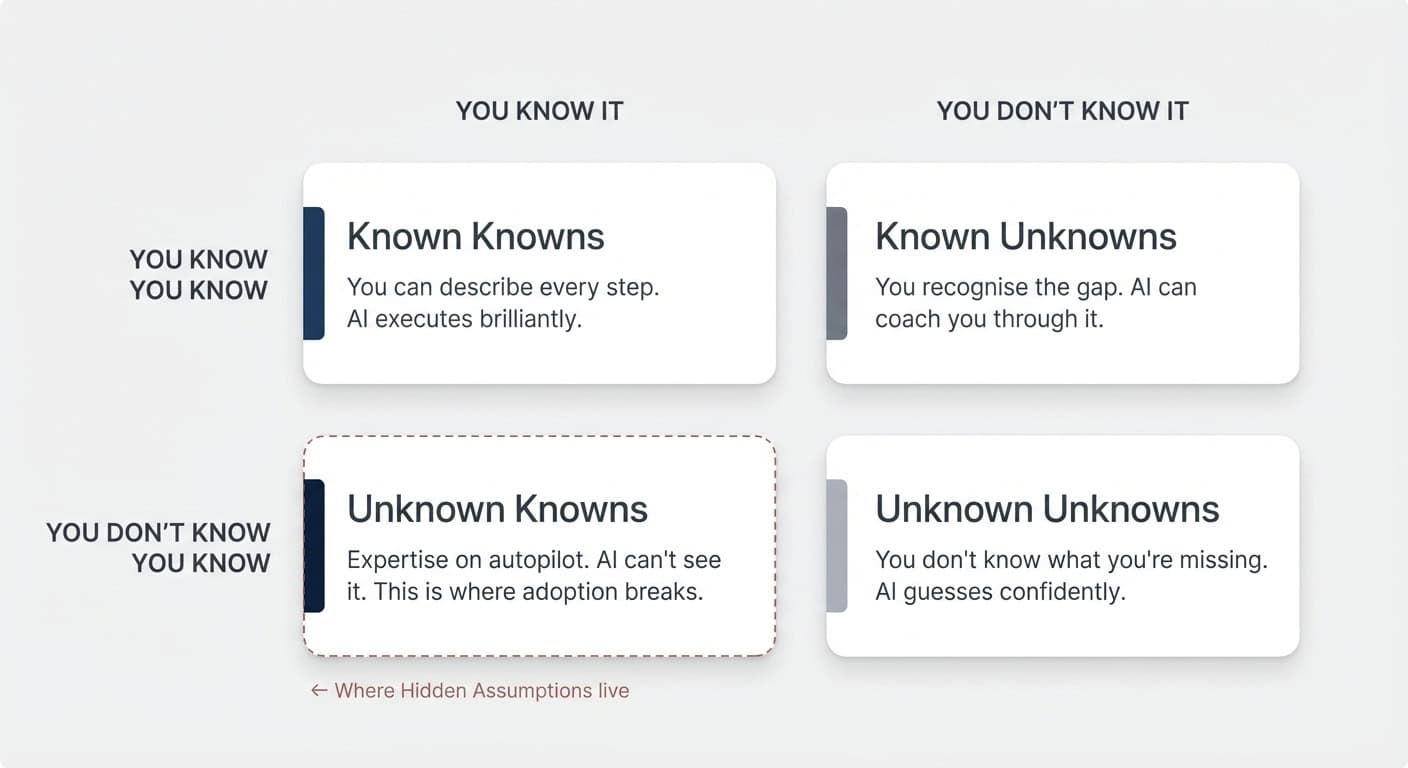

All knowledge sits somewhere in a two-by-two. Most people know three of the four quadrants. The fourth is where AI adoption breaks.

Known Knowns. You understand exactly how a task works and can describe every step. “Pull revenue from this dashboard. Review the last four weekly syncs. Extract blockers and wins. Executive summary first, then metrics, then narrative.” Give AI precise instructions like this, and it performs brilliantly.

Known Unknowns. You recognise you lack expertise. “I have never written a board report. I do not know what good looks like.” AI can coach you through it. You inspect the output, inject your judgment, iterate. Slower, but functional.

Unknown Unknowns. You do not realise what you do not know. “Create a report for the board.” The AI produces something polished. It misses context you never thought to provide, applies assumptions you never validated, and delivers output that ranges from mediocre to wrong.

Most people blame AI failure on this quadrant. The user did not know enough. The prompt was bad. The model hallucinated.

That is not what we see.

Unknown Knowns. You have the expertise — you just do not realise it needs to be articulated. The knowledge runs on autopilot. It feels like instinct, not information. You would never think to write it down because it does not feel like something you know. It feels like something you do.

This is where Hidden Assumptions live. And this is where AI adoption actually breaks.

The dispatch owner knew his rules.

Certifications override proximity.

Relationship accounts get consistent techs.

New hires shadow.

He had never been forced to articulate them. The knowledge was tacit, embedded in years of pattern-matching, invisible even to himself.

He was not operating in unknown unknowns.

He was operating in unknown knowns — expertise he possessed but had never made legible.

Not because he lacked it. Because it never occurred to him that it needed to be externalised.

We see this pattern play out in so many variations right now.

Turns out, AI isn’t just about slapping the latest frontier model onto an existing process and hoping for the best.

Hidden Assumptions: The Invisible Friction

Ask three people on the same team how a task should be done. You will get four different answers.

Not dysfunction. Just the natural state of organisations.

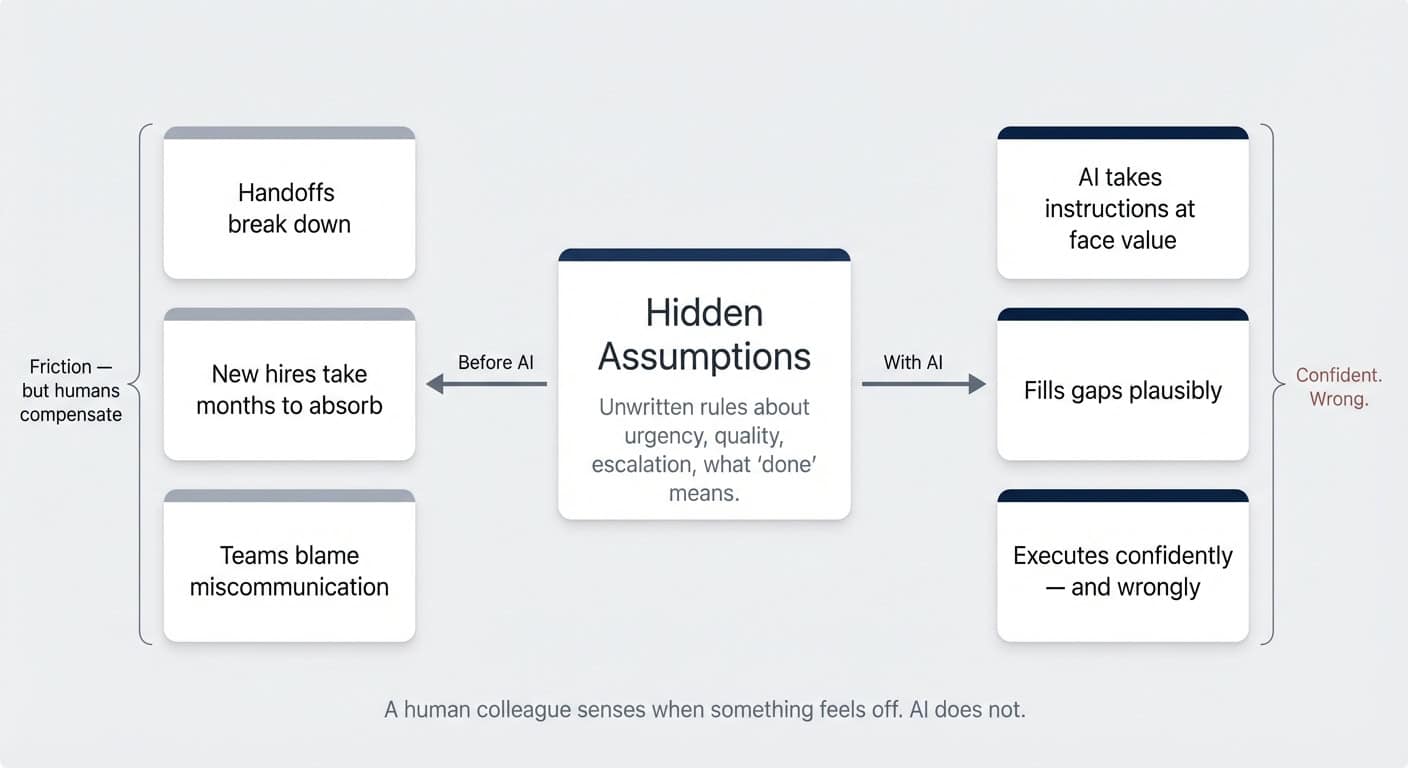

Every team accumulates what we call Hidden Assumptions — unwritten rules about what “urgent” means, which customers get special treatment, when to escalate versus handle independently, what “done” actually looks like.

They rarely get documented because they feel obvious to the people who hold them.

Before AI, hidden assumptions created friction.

Handoffs broke down.

New hires took months to absorb tribal knowledge.

Teams blamed miscommunication when the real problem was that the assumptions had never been spoken out loud.

This is not a new problem.

Knowledge management has been chasing it for decades.

Wikis, SOPs, knowledge bases, documentation sprints — entire industries built around capturing what people know.

Most of it gathered dust. The people who held the knowledge were too busy using it to stop and document it. The incentive was never strong enough to change that.

AI makes it much worse.

A human colleague senses when something feels off.

They pick up on context clues, ask clarifying questions, notice when an instruction contradicts past practice.

It’s not efficient (better documentation would help here too), but it is how most companies operate, so there is little pressure to change.

AI does none of this.

It takes instructions at face value and executes with confidence. If your hidden assumptions are not encoded, AI does not know they exist.

It fills the gaps with something plausible.

Confident.

Wrong.

Pouring Cement Over Invisible Structures

The work that matters most happens before AI enters the picture. Extracting how a business actually makes decisions — that is where the value lives.

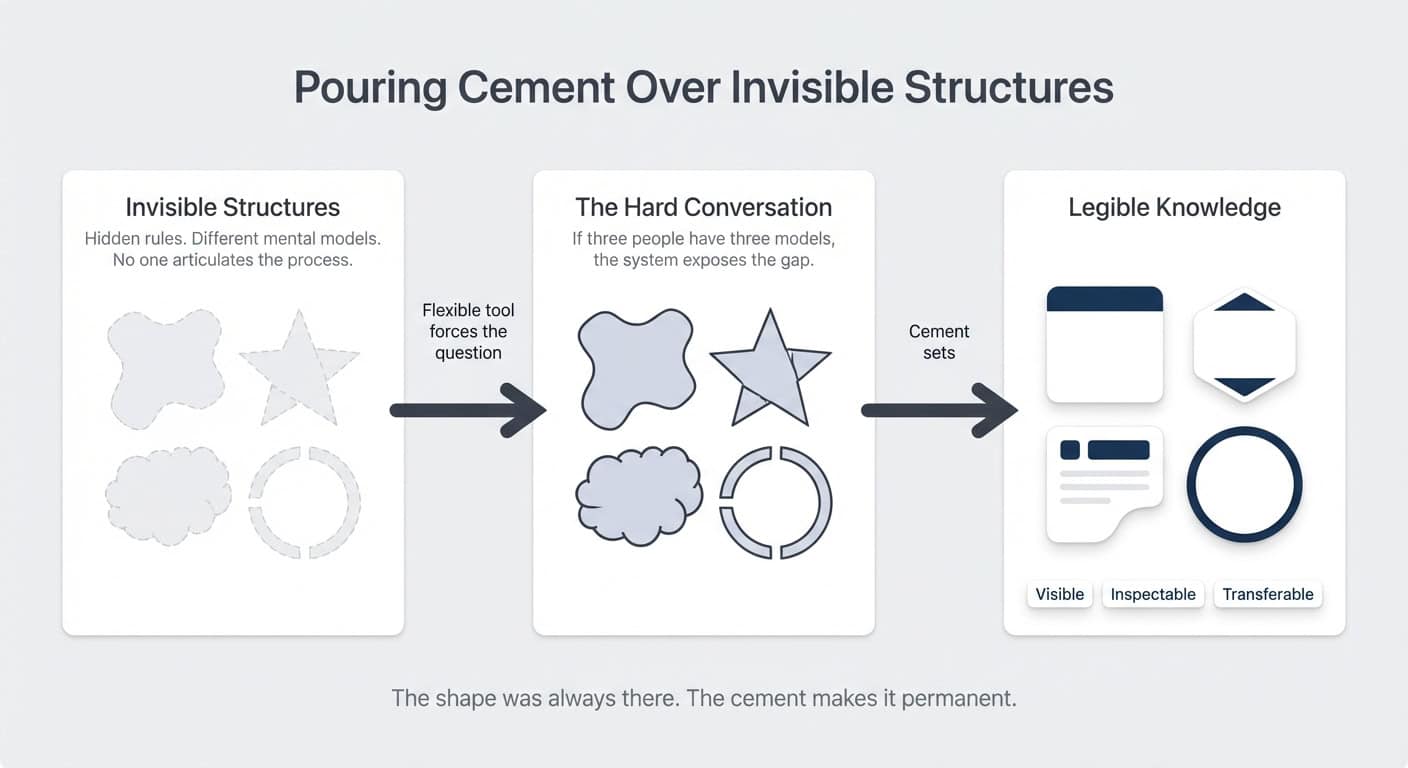

Think of hidden assumptions as invisible sculptures.

They have real shape.

They determine how work actually flows.

But because they are invisible, different people construct different mental models of what they are.

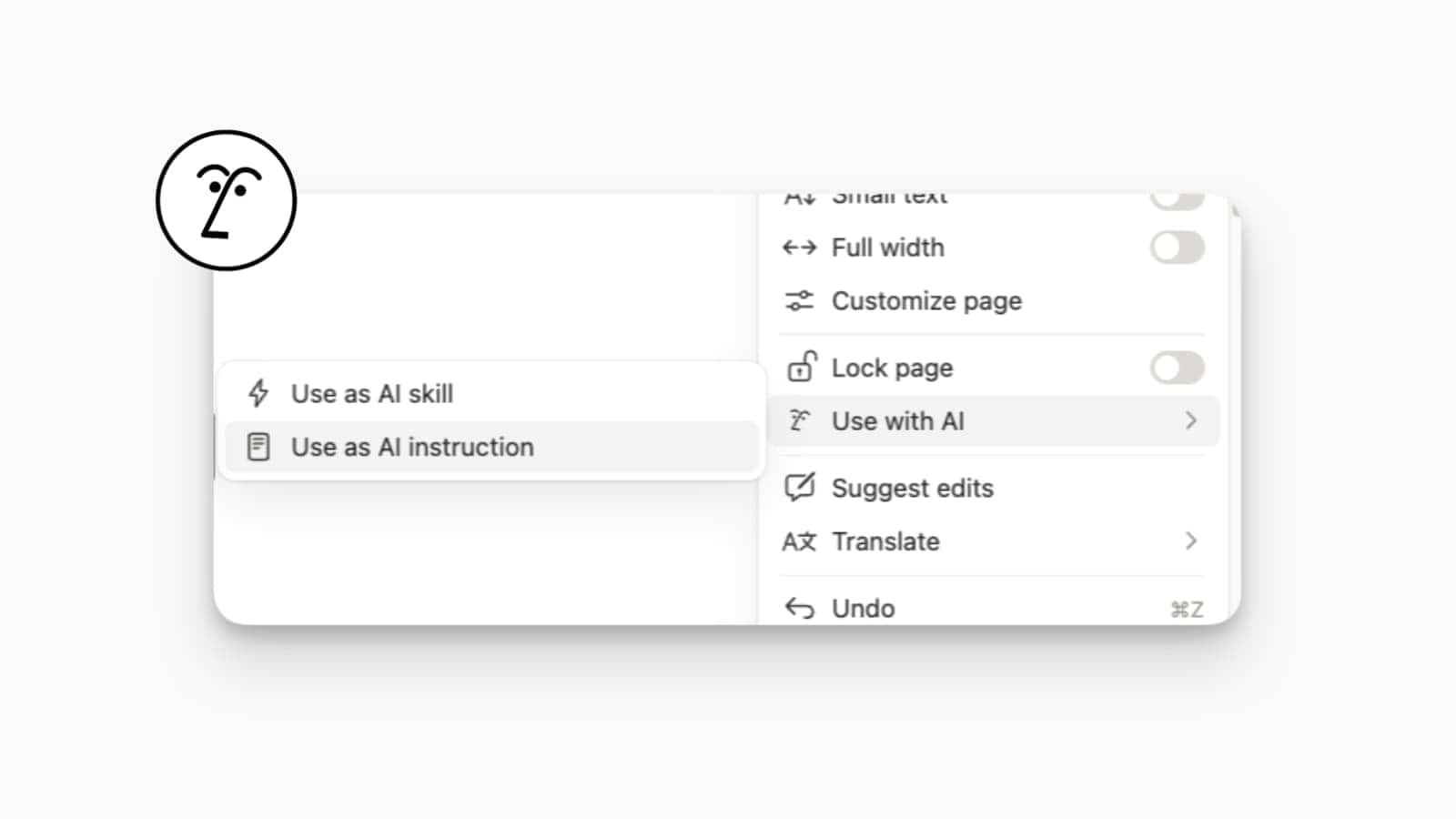

Funnily enough, Notion is actually really good at bringing these invisible, hidden assumptions into the open.

When a system is rigid — when a tool can only represent 70 percent of how you actually work — everyone operates under a shared excuse.

“We do it differently, but the tool cannot capture it.”

No forcing function to have the hard conversation. The remaining 30 percent stays invisible.

The tool’s limitations become a hiding place for unwritten rules.

When a system is flexible enough to match exactly how you think work should flow, something uncomfortable happens.

You have to decide.

If you want the system to reflect your process, you have to articulate what your process actually is.

If three people have three different mental models, a flexible system will not hide the gap.

It will expose it.

We call this pouring cement over invisible structures.

The shape was always there — running the business, guiding decisions, determining outcomes.

The cement makes it visible.

Permanent.

Inspectable.

Transferable.

For many teams, this is the first real win. Not the software.

The conversation the software forced them to have.

For the first time, they answered a question most organisations never ask:

How do we actually want this to work?

AI Demands What Flexible Tools Suggest

Flexible tools like Notion invite articulation.

AI demands it.

A well-designed workspace still functions with incomplete decisions. You can leave gaps. Rely on workarounds. Fill blanks with tribal knowledge. The system tolerates ambiguity.

AI does not.

It takes your instructions at face value.

Ambiguity? It guesses.

Edge cases you never articulated? It handles them however it sees fit.

That 30 percent you never discussed? AI makes those calls for you. Confidently. Consistently. Often wrongly.

(Think of it as the difference between hiring someone who asks questions on their first day versus someone who just… starts doing things. The AI is the second one. Every time.)

This is what happened with the dispatch owner.

His system looked complete.

Databases, properties, views.

But the decision logic — the actual intelligence that made dispatch work — was nowhere in the system.

The AI did not know to ask. It just acted.

The Atrophied Muscle

If articulating your own judgment is this valuable, why do so few people do it naturally?

Because for most of work history, there was no point.

Before flexible tools, before AI that could act on your instructions, shaping your own system was a fantasy.

Unless you wrote code, you had no real influence over the tools you used. You adapted to the tool.

The main skill of a knowledge worker was following fixed workflows within a given system.

The muscle of articulating how you want to work — of making preferences and logic explicit — never got exercised.

Same for transferring tacit knowledge.

Explaining what you know, step by step, so someone else can replicate it? Rare skill.

Great trainers are in demand because most experts cannot teach what they do. But you only develop this ability if your job requires teaching. For many roles, it never does.

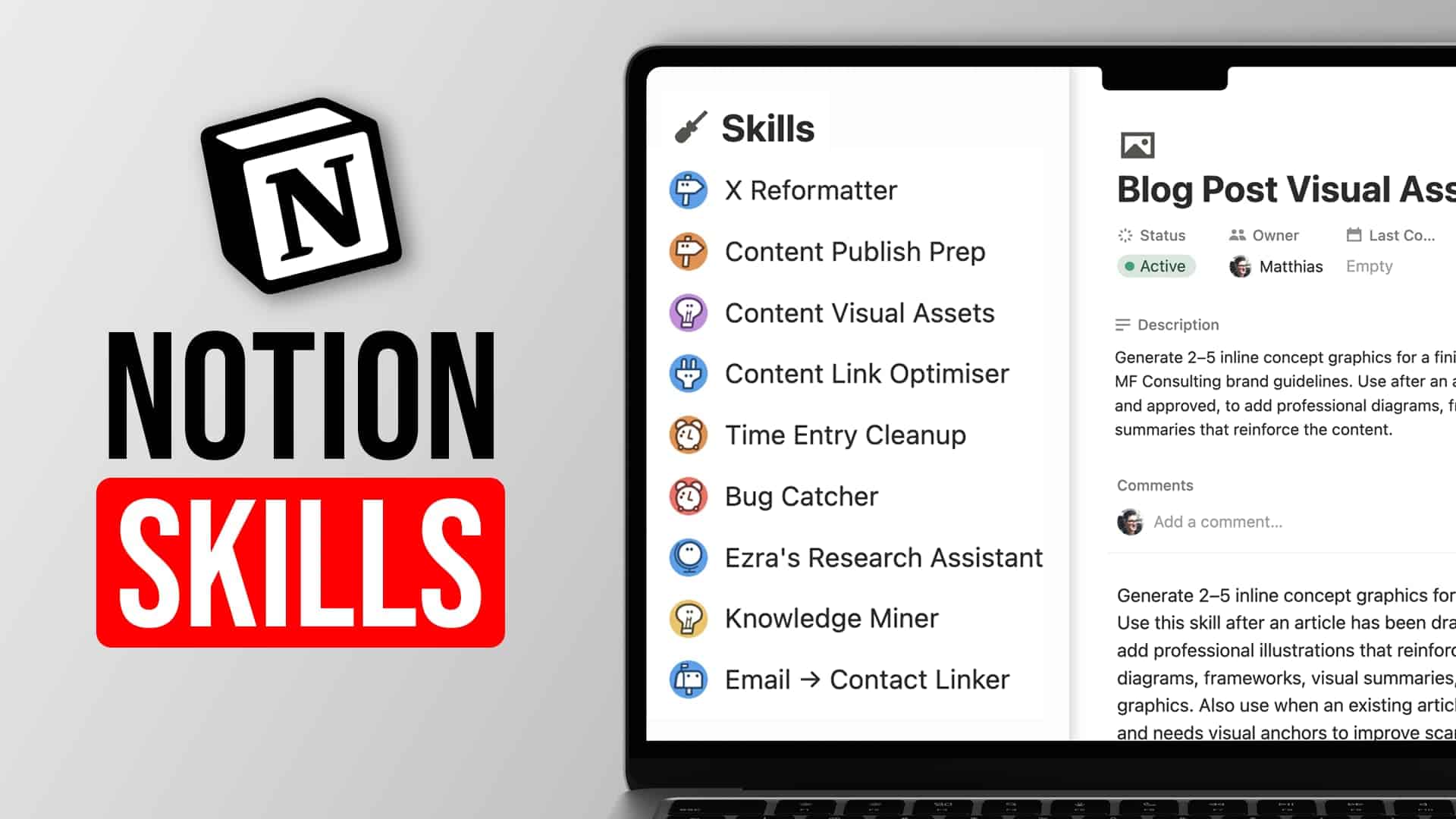

AI changes the frequency. Every interaction with an agent is a training moment. Every prompt is an opportunity to transfer knowledge — or to fail at it.

The demand for this muscle is no longer occasional. It is constant.

This is why the work of extraction matters so much.

Not just for AI readiness.

For organisational clarity.

The teams that go through this process end up with better operations, clearer communication, and faster onboarding — because the invisible has been made visible.

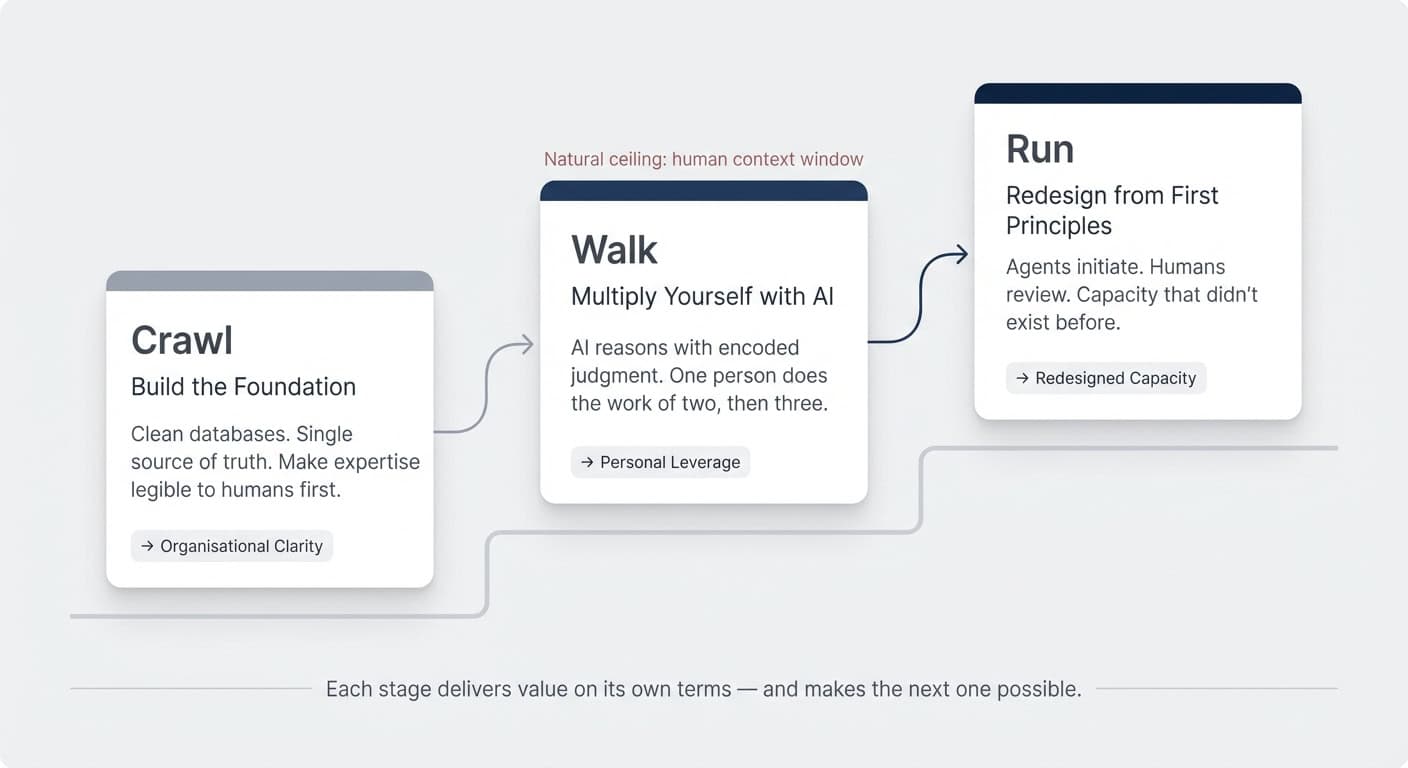

Crawl, Walk, Run

So how do you actually work on this in practice?

How do you build this muscle?

And how do you turn hidden assumptions into documented knowledge?

Let’s take a look at this through the lens of one of my favourite frameworks: crawl, walk, run.

Each builds on the last.

(and no, you can’t just skip to the end)

Crawl comes first. Before AI can reason about your business, your business needs to be legible to humans.

Clean databases.

A single source of truth.

Scalable best practices.

This is the architecture work that has been at the core of everything we do for years. It is not glamorous. It is the ground everything else stands on.

Nothing that comes after works without this.

Not AI assistance.

Not agents.

Not automation.

Every shortcut that skips the foundation ends up back here eventually — just with more mess to clean up.

Walk is the next stage. Once the foundation is solid, AI starts pulling its weight.

It can answer “Which customers are at risk?” — because “at risk” has been defined.

No service in 90 days, plus a previous complaint, plus no response to the last outreach.
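Written as a rule, that definition is only a few lines. Here is a minimal sketch, assuming a hypothetical `Customer` record with these three fields. Whether "no service in 90 days" means more than 90 days, and whether a customer who has never been serviced counts, are assumptions the team would still have to settle.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

AT_RISK_WINDOW = timedelta(days=90)


@dataclass
class Customer:
    name: str
    last_service: Optional[date]      # None if never serviced
    has_prior_complaint: bool
    responded_to_last_outreach: bool


def is_at_risk(customer: Customer, today: date) -> bool:
    """All three conditions must hold for a customer to count as at risk."""
    # Assumption: a customer with no service on record counts as overdue.
    no_recent_service = (
        customer.last_service is None
        or today - customer.last_service > AT_RISK_WINDOW
    )
    return (
        no_recent_service
        and customer.has_prior_complaint
        and not customer.responded_to_last_outreach
    )


today = date(2025, 6, 1)
quiet = Customer("Acme", date(2025, 1, 15), True, False)
recent = Customer("Birch", date(2025, 5, 1), True, False)
print(is_at_risk(quiet, today))   # True
print(is_at_risk(recent, today))  # False
```

The code is trivial. Agreeing on the three conditions is the real work.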

The AI could retrieve data before. Now it can reason, because the judgment is legible enough for it to use.

This is where most “AI-forward” teams are today. Individual productivity goes up. The organisation starts to see what is possible. One person does the work of two. Then three.

And then you hit the ceiling.

The models keep getting better.

The tools keep getting faster.

But the human at the centre of each workflow does not scale.

There are only so many AI-assisted tasks you can stack onto one person’s plate before they become the bottleneck — not the AI, not the system, the human context window itself.

I am feeling this more and more in my own day.

I can unironically say: today, I’m 10x more productive than before AI. Easily.

But I don’t think my own context window is big enough to support a jump to 20x, 30x or 100x.

(and we all know that once 10x becomes the norm, not the outlier, this is where we want to go next)

Walk is valuable. It remains valuable. But it has a natural limit: the capacity of the person running the show.

Run is where the question changes, and where first-principles redesign begins.

Crawl and Walk both assume the human is the actor and AI is the tool. Run asks: does it have to be that way?

A word on what “first principles” means here — because it is easy to hear “redesign” and think “start over.”

That is not what this is.

You are not burning down the data architecture you built in Crawl.

You are not telling the people who multiplied themselves in Walk that their work was a warmup.

You are taking the same building blocks — the clean data, the documented logic, the legible expertise — and recomposing them.

Instead of asking “how can AI help me do this faster?”, you ask “if I were designing this process from scratch, knowing that both humans and AI are available, who should do what?”

Sometimes the answer is: the agent initiates, the human finishes.

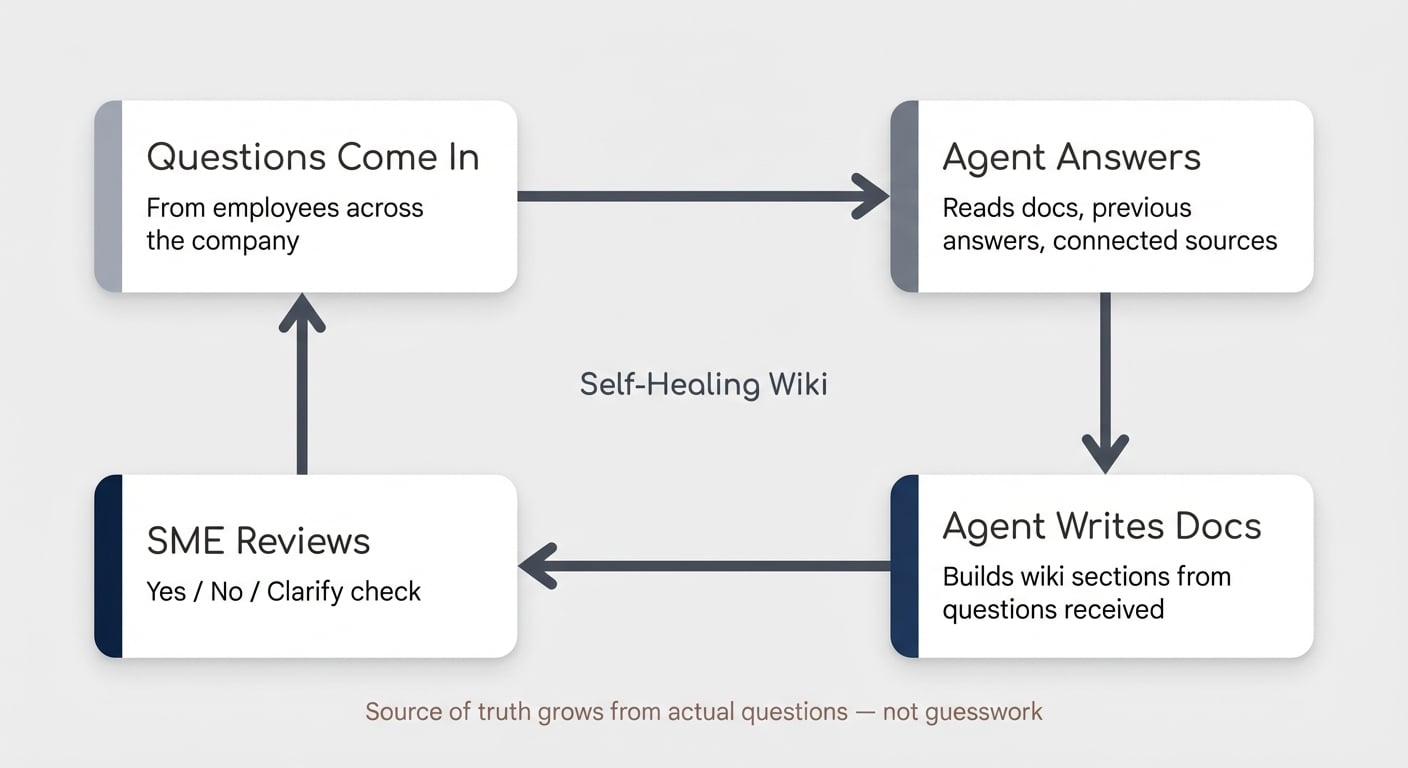

An agent runs Monday morning. Reviews the week’s jobs. Checks certifications. Flags conflicts. Identifies relationship accounts. Drafts a proposed schedule. The dispatcher reviews, adjusts, approves. What took two hours takes fifteen minutes.
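The handoff itself can be sketched in a few lines: the agent drafts and flags, and nothing ships until a human approves. This is an illustration under assumptions, not a description of any real deployment. `Proposal`, `draft_schedule` and `needs_review` are hypothetical names, and the jobs are plain dictionaries standing in for whatever the workspace actually stores.

```python
from dataclasses import dataclass, field


@dataclass
class Proposal:
    job_id: str
    technician: str
    flags: list[str] = field(default_factory=list)
    approved: bool = False  # only the dispatcher flips this


def draft_schedule(jobs, certifications, relationship_accounts):
    """Turn tentative assignments into proposals, flagging rule violations."""
    proposals = []
    for job in jobs:
        proposal = Proposal(job_id=job["id"], technician=job["technician"])

        # Flag a technician who lacks the certification the job requires.
        held = certifications.get(job["technician"], set())
        if job["required_cert"] not in held:
            proposal.flags.append("missing certification")

        # Flag a relationship account assigned to someone other than its usual tech.
        usual = relationship_accounts.get(job["customer"])
        if usual is not None and usual != job["technician"]:
            proposal.flags.append(f"relationship account, usually {usual}")

        proposals.append(proposal)
    return proposals


def needs_review(proposals):
    """Return only the proposals the dispatcher has to look at."""
    return [p for p in proposals if p.flags]


jobs = [
    {"id": "J1", "customer": "Acme", "technician": "Dee", "required_cert": "hvac"},
    {"id": "J2", "customer": "Birch", "technician": "Ben", "required_cert": "hvac"},
    {"id": "J3", "customer": "Cole", "technician": "Ana", "required_cert": "hvac"},
]
certifications = {"Ana": {"hvac"}, "Dee": {"hvac"}, "Ben": set()}
relationship_accounts = {"Acme": "Ana"}

for p in needs_review(draft_schedule(jobs, certifications, relationship_accounts)):
    print(p.job_id, p.flags)
# J1 ['relationship account, usually Ana']
# J2 ['missing certification']
```

The dispatcher sees two flagged jobs instead of the whole week. The third never needs a second look.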

Sometimes the answer is: the agent runs the entire loop, and a human spot-checks. A reconciliation process that used to require a full-time person now runs autonomously, flagging only the exceptions that require judgment.

The agent does not replace the human. It takes over the parts that were always execution, never judgment — the parts that only stayed with humans because there was no alternative.

Crawl makes your expertise legible. Walk proves that legibility has value. Run asks: now that the knowledge is legible, does a human still need to be the one running it?

Most teams try to run before they crawl.

They want the agent.

They skip the foundation.

The output is garbage — not because the AI is bad, but because the system was never legible enough for AI to work with.

The value compounds at each stage.

Crawl gives you organisational clarity even without AI.

Walk gives you personal leverage.

Run gives you capacity that did not exist before.

And the person guiding a business through all three becomes the architect of how it makes decisions.

Why Now

Two things changed in the same window.

AI got good enough to act on structured knowledge.

And flexible tools like Notion got good enough to capture it.

For the first time, the full loop works: extract judgment, encode it in a system, let AI reason with it, let agents act on it.

This was not possible three years ago. The models could not reason reliably. The tools could not bend to match how a business actually operates. Both constraints lifted at once.

The teams that move now build a compounding advantage.

Every week of encoded judgment makes the system smarter.

Every agent deployment reveals new assumptions worth extracting.

Every iteration widens the gap between organisations that have made their expertise legible and those still running on reflexes and Slack threads.

The teams that wait accumulate a different kind of debt.

Their best people’s knowledge stays locked in formats only humans can run. Their processes stay invisible.

And every competitor who does the extraction work first sets a pace that gets harder to match.

The Decision Architect

Nobody has this fully figured out.

The technology is barely eighteen months old. There are no playbooks with five years of proof behind them.

That is exactly the point.

The organisations willing to experiment — to build while the rules are still being written — are the ones who will define how this works.

The foundation you build in Crawl does not get thrown away when you reach Walk.

The leverage you gain in Walk does not become irrelevant when you reach Run.

Each stage makes the next one possible — and each stage delivers value on its own terms.

The question is never “should we start over?” It is always “what are we ready for next?”

We are in this every day. Building these systems with our clients. Experimenting in our own business. Publishing what we learn.

The playbook is being written one engagement at a time.

If your team is ready to start making its expertise legible — whether you are building the foundation, multiplying your team, or redesigning how the work gets done — we are here to build it with you.